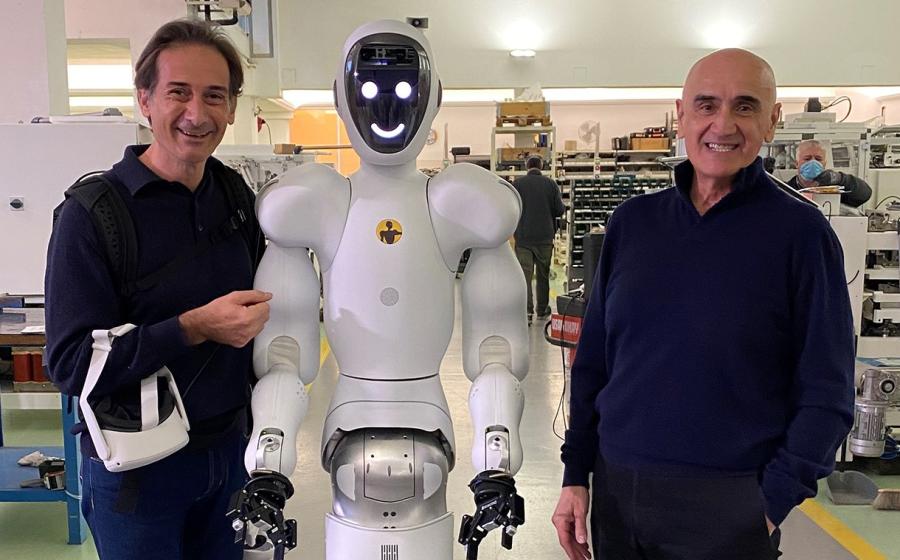

As part of the partnership, Altopack will become a minority shareholder in the Norwegian robot developer.

Halodi Robotics has developed a humanoid robot that uses artificial intelligence (AI) to perform pre-programmed tasks. It moves on two wheels and has movable arms for carrying out work tasks.

Under the terms of the agreement, the companies will work closely together to develop and adapt the packing robot’s software. Part of the work will take place at Altopack’s development centre in Bologna.